Beam me up

We all understand how a Star Trek transporter works, which is surprising since it's not real and I don't even understand how my toaster oven works. Basically, your body is broken up into a bazillion tiny pieces, those pieces are sent down to a planet's surface, and then they're reassembled. That's sort of the way we build software in an Agile context.

If you're reading this, you're likely using Scrum or some variation of an Agile process. If you are, you likely take an idea for a whole feature or capability and break it into lots of little stories or backlog items, each small enough to build in a couple of days. But likely not a bazillion. You'll build those little things until you can assemble them into the feature you actually release to users.

It usually takes many backlog items to create a releasable feature

You may recall from things I've written and said before that you only get the outcome, or the value, when you release the feature and users can see, try, use, and keep using it. Half a feature in your product is about as valuable as half a Spock on the planet's surface. We'll need it all.

You’ll only see the outcome when the whole feature is released.

Keep track of whole features

If you're on a product team, you'll need to keep track of those whole features, since that's where the value really comes from, just as Scotty needs to keep track of the whole Spock. But many of the Agile teams I've worked with lose track of them. They fail to reflect on how long it actually took to build the whole feature, the quality of the whole feature, and, most importantly, the actual outcome observed after the feature was released. Imagine if Scotty only tracked the velocity at which particles traveled to the planet's surface, but not whether Spock actually made it.

Product teams must reflect on the actual effort, actual quality, and actual outcome for everything they ship.

Recipe: Visualizing actual effort and outcome

Every two weeks, at your team retrospective, reflect on actual effort and outcome. Add this practice to your Agile process, or invent a better one and tell me about it.

The steps below are my starting recipe for visualizing actual effort and outcome. Like all good cooks, you'll tailor the recipe to fit your tastes.

0. Create your tracking board

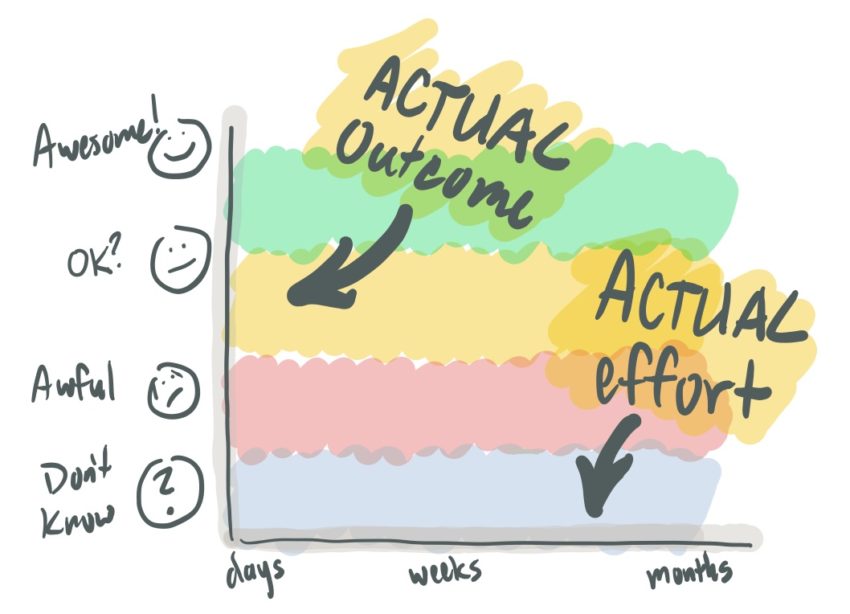

Create a simple two-dimensional visualization showing the relationship between actual effort and actual outcome. It should look something like this.

If you're working in an office, create it on a wall. Post-COVID, many of you are likely using collaborative tools like Mural or Miro; create the board there. If you have a board you normally use for team activities like retrospectives, add it to that one.

1. Identify features finished during this cycle

Ask: have we released any completed, ready-to-use features?

Write each feature's name on a sticky note, along with the approximate date it was finished. You'll want to know how long you've been tracking it. It's going to be a while. Trust me.

2. Discuss actual effort and quality

Start by discussing the actual effort

How long did the whole feature take? Just roughly. Did it take days? Weeks? Months? Quarters?

How long did it take relative to what you’d originally predicted? If it took longer than expected, it might be good to reflect on the reasons why.

If it took more time than expected, mark it as challenged.

Now discuss finished quality

I ask teams to consider three aspects of quality. These are subjective evaluations. Your team can get a feel for how they'd rate each aspect by asking everyone for a score from 1 to 5. Have them vote by holding up one to five fingers for each of these three aspects.

- User Experience Quality: does the feature look and feel good to use? Is it consistent with the quality of other parts of your product?

- Functional Quality: Does the feature perform as expected? Does it have bugs? Maybe even bugs you haven’t yet found? How confident are you that it’ll perform well in production?

- Code Quality: How good is the code written for this feature? Is it strong and easy to improve? Or have you just added more technical debt to your product?

Write these rough quality evaluations on the sticky.

Tag challenged features

If the feature felt challenged, because of how long it took, the quality you finished with, or both, mark it clearly as "challenged." I just mark it with a red pen or a colored dot. It'll be interesting to watch how these challenged features do when they enter the real world.

3. Place the feature on your tracking board

Organize these finished features left to right based on their actual effort. Put them in the bottom row of the visualization, the row labeled "Don't know." It should look something like this.

Now you wait.

You won't be able to evaluate actual outcomes until the feature is released and you begin to get data or feedback from users. You might discuss exactly how you'll learn about the outcomes. Sometimes you'll hatch a plan to check in with key customers to learn how it's going or to observe their use. Sometimes this is where you realize you really should have instrumented the product to measure use and show you who's using it and how often.

If you’re doing this every two weeks, maybe something you put on the board a few weeks ago has some outcomes you can talk about. So, move on to the next step.

4. Discuss actual outcomes

Let me give you a bit of a reminder here:

Outcomes are what your users do, say, and feel

If everyone is congratulating you on finishing all this work on time… If your business stakeholders love it… If you held a launch party and everyone got t-shirts… These are all terrific things, and you can be proud. But they are not outcomes.

To evaluate the outcome you’ll need to ask questions like these about each feature:

- Did our users discover and easily learn to use the feature?

- Do our target users actually use it as much as we expected they would?

- For internal or B2B products: Do they get more work done faster? (efficiency) Do they do better work or make fewer mistakes? (effectiveness)

- When asked, do they say good things about this feature?

All these evaluations are based on the expectations your team had before you released the feature. If you didn't have any, consider discussing target outcomes before you release your next feature.

Scan the features in the “don’t know” row:

Look for features where you now know something about the outcome. Especially look at features that have been there a long time without knowing the outcome.

If you know something about the actual outcome, discuss it. Then move the feature up out of “don’t know” and place it somewhere in the awful to awesome continuum.

For extra credit, note the date you moved it. The time between when the feature was finished and when you moved it is how long it took to learn its outcome. Knowing the average time this takes is useful information.

Scan features elsewhere on the board:

Discuss changes to features you’ve already released.

- Do you have new information about the outcomes that would change your earlier evaluation for a feature?

- Have you released small changes or improvements that would change your evaluation?

For any existing features whose outcome has changed, move them up or down the awful-to-awesome continuum.

5. Improve your product based on what you’ve learned

The goal of good product development isn't just to build more stuff faster; it's to build better, more valuable things. Now that you've discussed actual outcomes, you have some decisions to make.

Should you improve features that could be more valuable?

Should you remove or replace features that aren’t valuable?

Decide on any actions you’d like to take. You’ll likely need to create a backlog item for this new work.

The minute you start doing things like this you’ve switched over from delivering software to managing a product. Congratulations!

6. Reflect on what you've learned

You’ve just evaluated actual effort, quality, and outcome. Now it’s time to reflect a bit on how you’re doing things and how you might improve the way you’re working.

Discuss better ways to measure outcomes

For most teams new to this way of working, getting data on outcomes can be challenging.

Discuss ways to improve outcomes

You may see some recurring problems with features and their outcomes. Are there things you should change in your process that would improve outcomes?

Look at your ratios

Size ratios: where do features fall on the small to large continuum? Remember:

Releasing lots of small features continuously ultimately improves ROI and reduces risk.

In the future, should you take steps to find smaller successful versions of the features you’ve released?

Ratios of awesome to awful: What percentage of features fall in awesome, meh, and awful?

If a third of the features you release perform as well as you’d hoped, you’re doing very well.

Most features will take some iteration to get to awesome. Some may never be as awesome as you expected. The fact that most features aren't as valuable as you'd expect may come as a shock to you.

Should you take steps to improve the outcomes of features before they’re released?
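If your team exports the board to a spreadsheet or tracks it in a tool, these ratios, along with the average time to outcome from step 4, are simple arithmetic. Here's a minimal Python sketch, assuming a hypothetical `Feature` record for each sticky and the three outcome bands awesome, meh, and awful. The feature names and dates are made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Optional

BANDS = ("awesome", "meh", "awful")


@dataclass
class Feature:
    # Hypothetical record for one sticky note on the tracking board.
    name: str
    finished: date                        # approximate date the feature was finished
    outcome: Optional[str] = None         # one of BANDS, or None while in "don't know"
    outcome_known: Optional[date] = None  # date the sticky moved out of "don't know"


def outcome_ratios(features):
    """Percentage of features with a known outcome that fall in each band."""
    counts = Counter(f.outcome for f in features if f.outcome is not None)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {band: round(100 * counts[band] / total) for band in BANDS}


def average_days_to_outcome(features):
    """Average days from 'finished' to 'outcome known', skipping features still in 'don't know'."""
    spans = [(f.outcome_known - f.finished).days
             for f in features if f.outcome_known is not None]
    return sum(spans) / len(spans) if spans else None


# Made-up board contents, for illustration only.
board = [
    Feature("Saved searches", date(2023, 1, 10), "awesome", date(2023, 2, 7)),
    Feature("CSV export", date(2023, 1, 24), "meh", date(2023, 3, 7)),
    Feature("Dark mode", date(2023, 2, 7), "awful", date(2023, 3, 21)),
    Feature("Bulk edit", date(2023, 2, 21)),  # still in "don't know"
]

print(outcome_ratios(board))  # {'awesome': 33, 'meh': 33, 'awful': 33}
print(average_days_to_outcome(board))
```

Because of rounding, the percentages won't always add up to exactly 100.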

Look at the correlations or the absence of correlation

Effort vs. challenged or on time: You'll likely notice that challenged items usually took more effort. That's partly because being challenged often meant taking longer than expected. And it's partly because larger things are more likely to hide complexity. It's a good case for breaking things down into smaller successful releases.

Effort vs. good outcome: You'll likely notice no correlation between how long something took and its outcome. Small things are just as likely to have a good outcome as large things. Is that the case for your product?

Challenged items vs. outcome: You’ll likely notice little or no correlation between those items marked as “challenged” and their outcomes. Challenged items are usually just as likely to have good outcomes as those that weren’t challenged. This should be liberating news. If you were worried about the fact that something took far longer than it should have, you may find that it’s just as valuable as you’d hoped despite that. So, you can feel a little less bad about taking too long. Worry more about those features you finished on time that have bad outcomes. They were the real waste of effort.

Seed the board by evaluating features you’ve already built

When you first create this board, seed it by identifying the features you've shipped over the past quarter or two. Organize them by effort and outcome. I always ask new product teams to do this. Once those features are on the board, you can do a little reflection on them – steps 5 and 6 above.

Disclaimer and request for evidence

Like all good cooks you’ll tailor the recipe to fit your tastes. Maybe combine it with other recipes. If you’re a real chef, you’ll invent your own recipe. I’d like to hear about the practice you ultimately end up with.

You may read this recipe and realize you already do different things that give you the same benefit. I’d like to hear about those different things.

And, if you work for a company where it’s not so important to look at actual effort and outcome, I’d like to hear about that too.

This is a more detailed description of the practice described in my contribution to 97 Things Every Scrum Practitioner Should Know: Collective Wisdom from the Experts.

I’d love to hear what you think or do differently after reading this.

You may have noticed there’s no way for you to comment on this article. And you may be motivated to do so… even if it’s just to point out a grammatical or spelling error (which I can never really seem to get rid of).

You should say something about the article on Twitter. Tweet at me: @jeffpatton

You could also send email to me: jeff (at) jpattonassociates (dot) com